See the Platform in Action

Real screenshots from AA Regression Tester v3.0

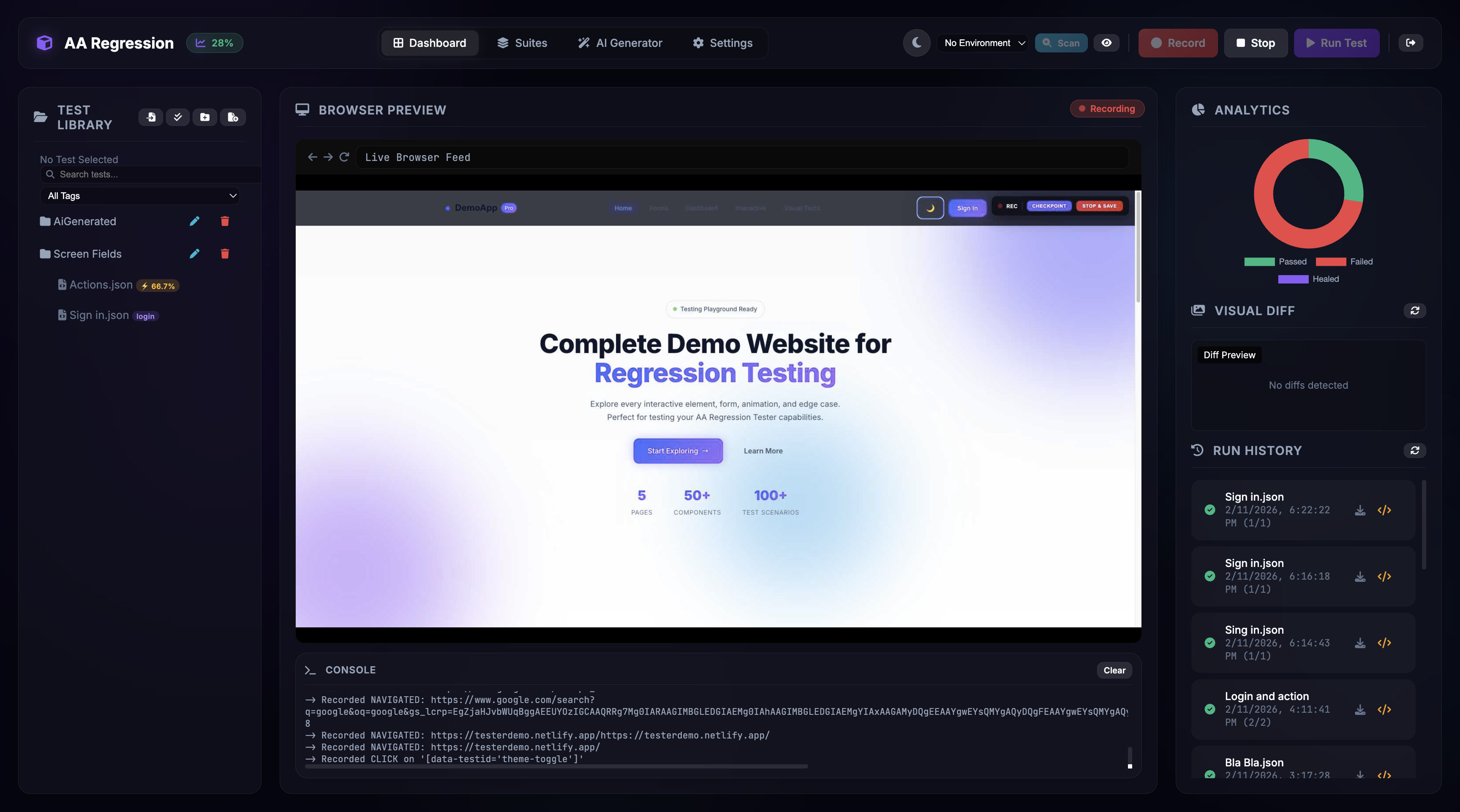

3-Column Glassmorphism Dashboard

The left column holds your test library, organized with folders, tags, and search. The center column shows live browser previews and console output. The right column presents visual diffs, run history, and analytics.

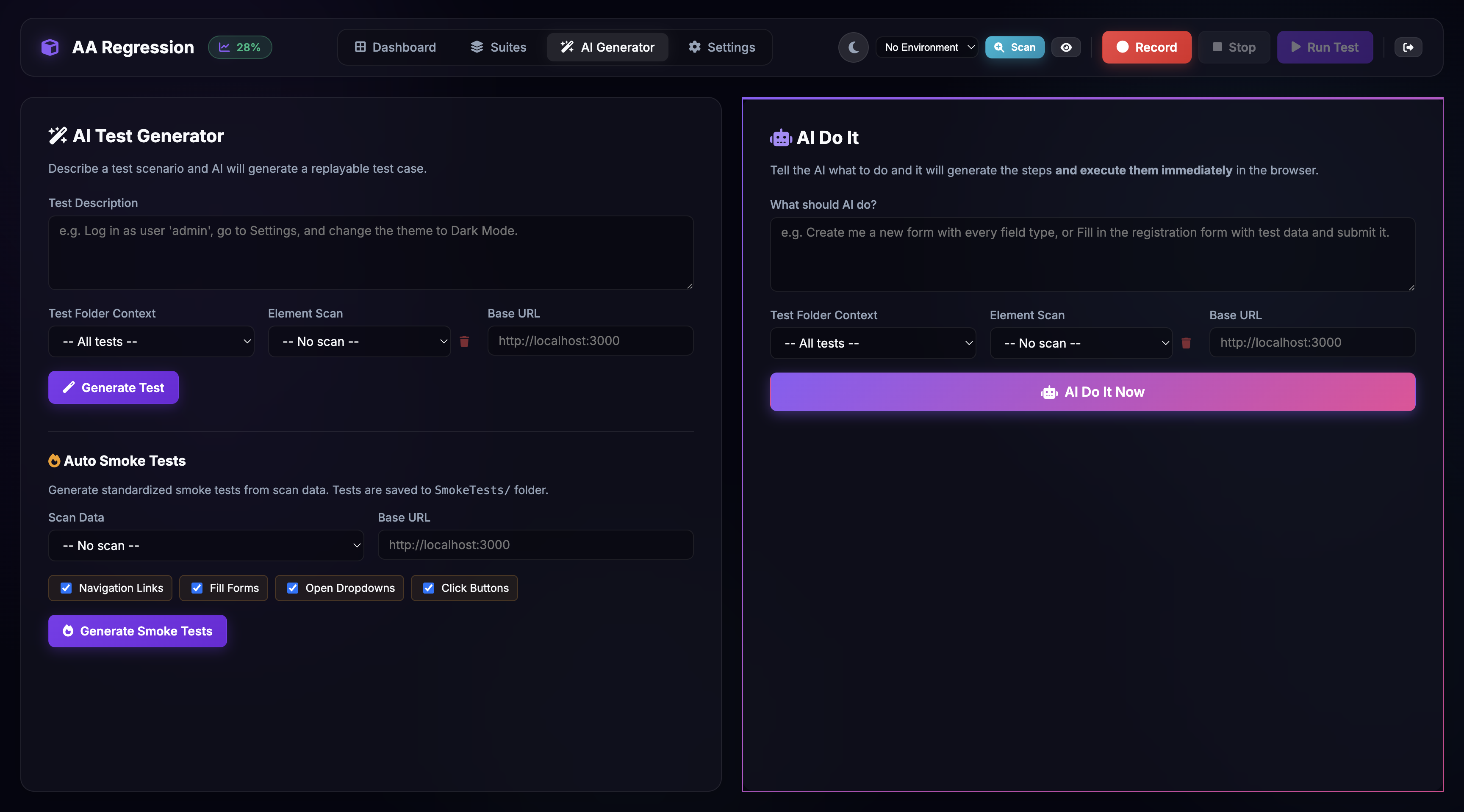

AI-Powered Test Generation

Describe what you want to test in plain English. Use "AI Do It" to generate and run a test in one step, or use Auto Smoke Tests to automatically create navigation, form, dropdown, and button tests from your scanned elements.

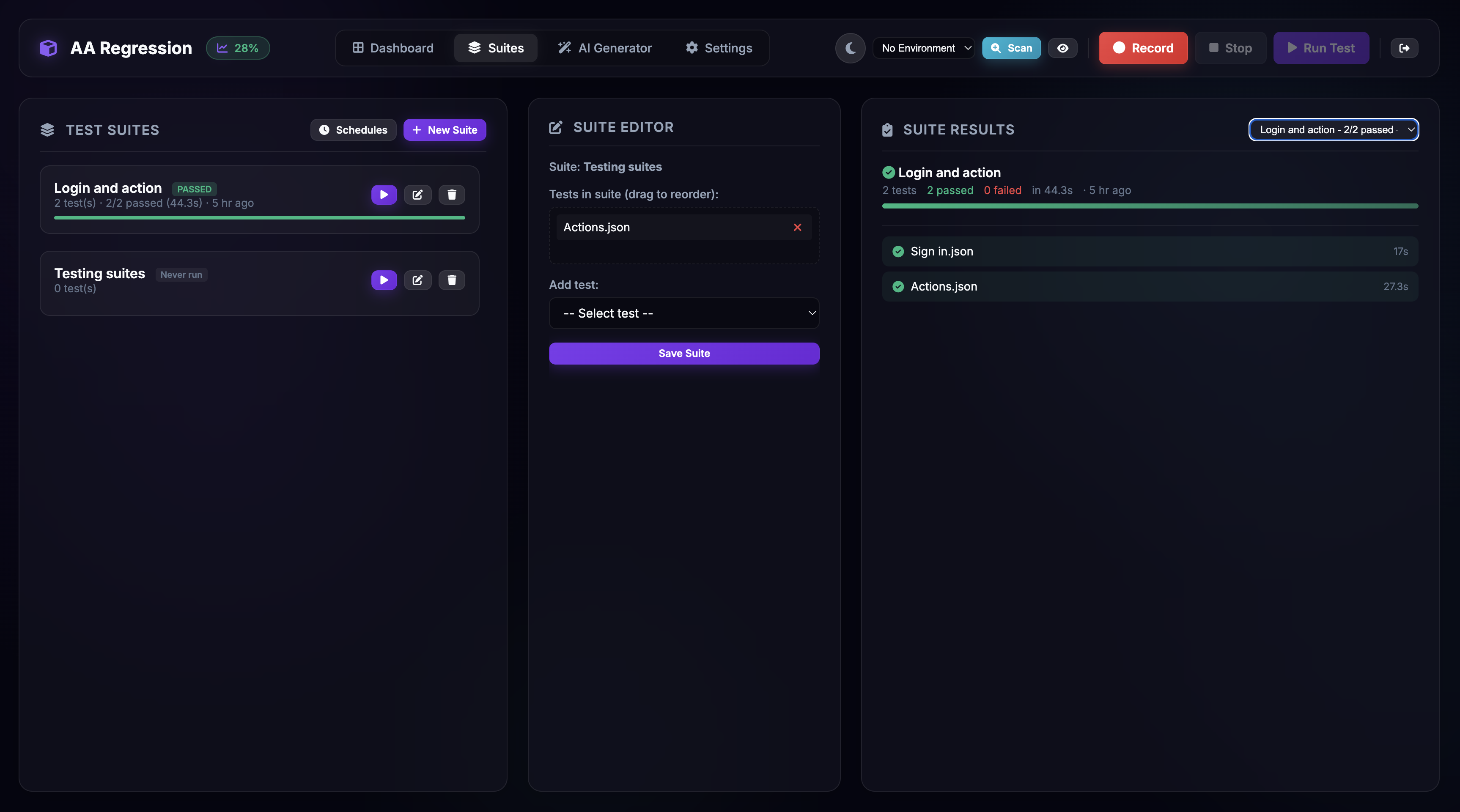

Suite Management & Scheduling

Organize tests into suites and run them sequentially or in parallel with up to 8 workers. Schedule runs with cron expressions. Live progress, pass/fail badges, durations, and suite history are visible at a glance.

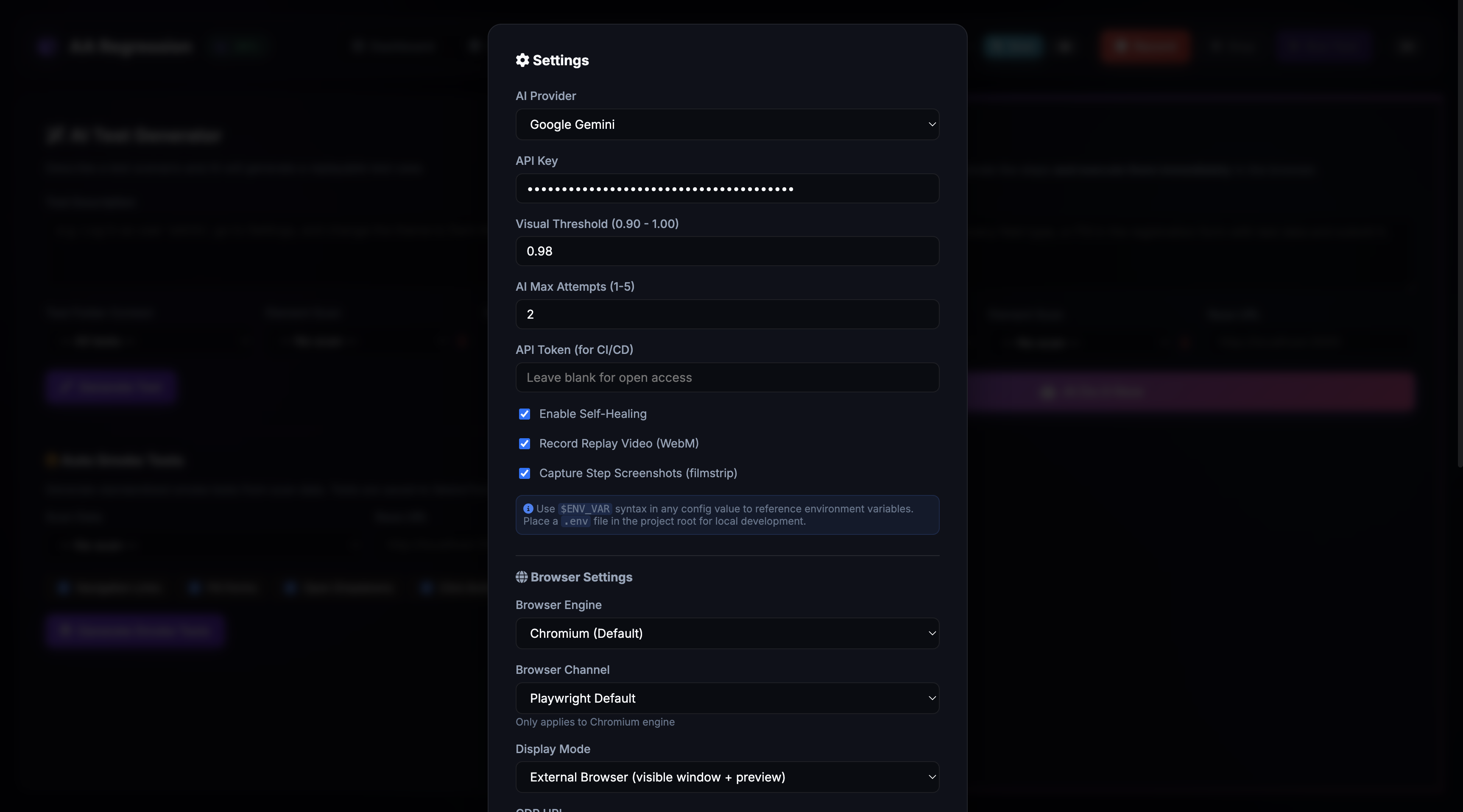

Flexible Configuration

From a single settings modal, choose your AI provider (Gemini, OpenAI, or Claude), configure visual regression thresholds, select the browser engine and display mode, set the number of parallel workers, and manage notification channels.